
Defining Integer Binary Length

The Mathematical Formula

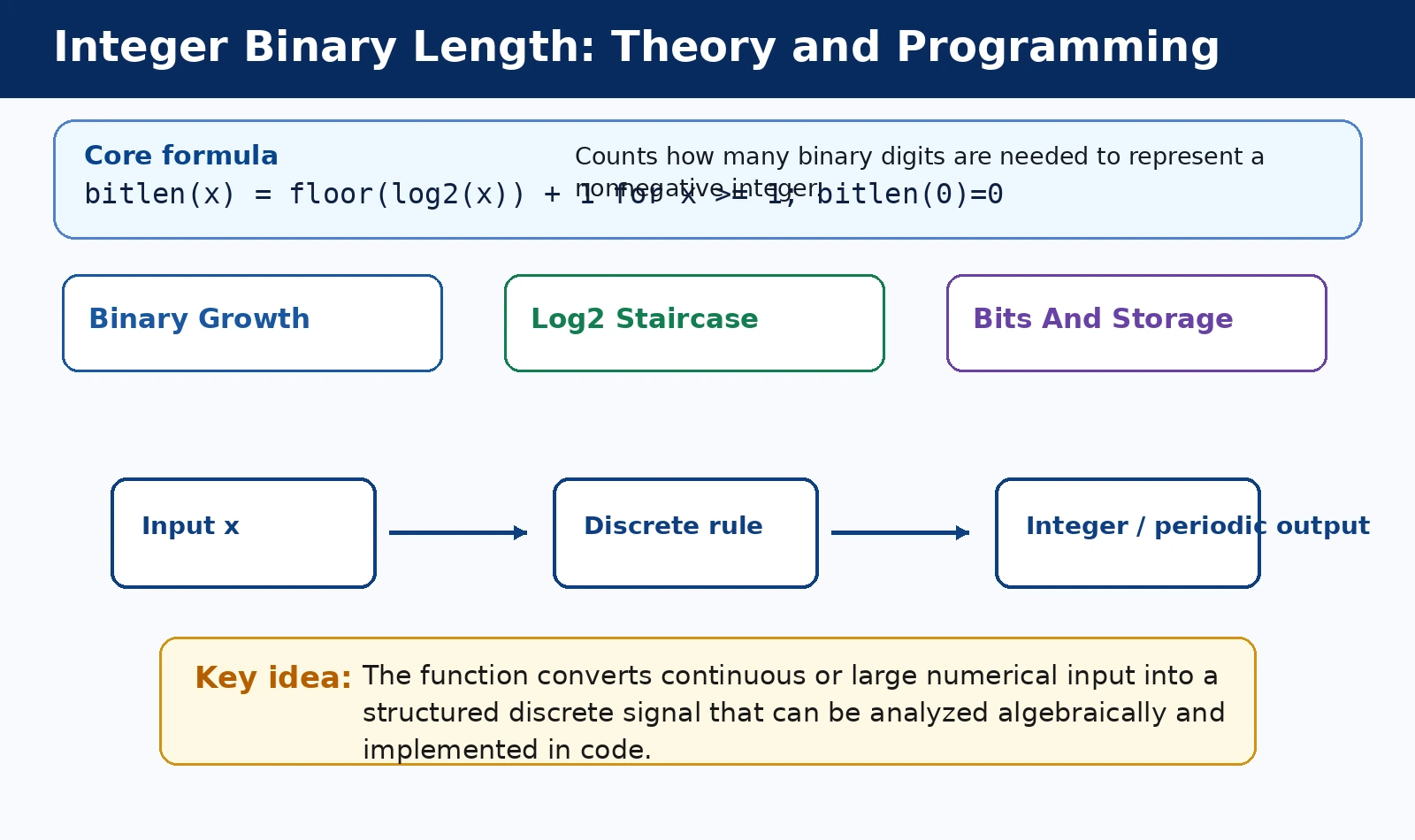

The integer binary length represents the total count of digits in a number's binary string. For any positive integer ##x##, the length is calculated using the base-2 logarithm. This mathematical approach provides a precise way to quantify bit requirements.

The core formula is expressed as ##\lfloor \log_2(x) \rfloor + 1##. Here, the logarithm identifies the power of two that the number reaches. By applying the floor function, we normalize the value to the nearest lower integer.

Adding one to the floor value ensures we account for the most significant bit. Without this increment, the formula would only represent the exponent rather than the total count. This adjustment is vital for accurate representation in digital systems.

In practical terms, this formula maps a decimal value to its binary footprint. It allows engineers to predict storage needs without performing full conversions. Consequently, it serves as a bridge between continuous mathematics and discrete digital logic structures.
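The formula can be written almost word for word in Python. The following is a minimal sketch, not a reference implementation: the function name `binary_length` is chosen here for illustration, and because `math.log2` works in floating point, the result is only trustworthy for moderately sized inputs.

```python
import math


def binary_length(x: int) -> int:
    """Bit length of a positive integer via floor(log2(x)) + 1."""
    if x <= 0:
        raise ValueError("the formula is only defined for positive integers")
    # math.log2 returns a float, so very large integers may be misrounded;
    # this sketch is intended for small and medium-sized values.
    return math.floor(math.log2(x)) + 1


for value in (1, 5, 8, 255, 256):
    # Print the value, its binary digits, and the computed length.
    print(value, bin(value)[2:], binary_length(value))
```

For exact results on arbitrarily large integers, an integer-only method such as Python's `int.bit_length()` avoids the floating-point step entirely.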

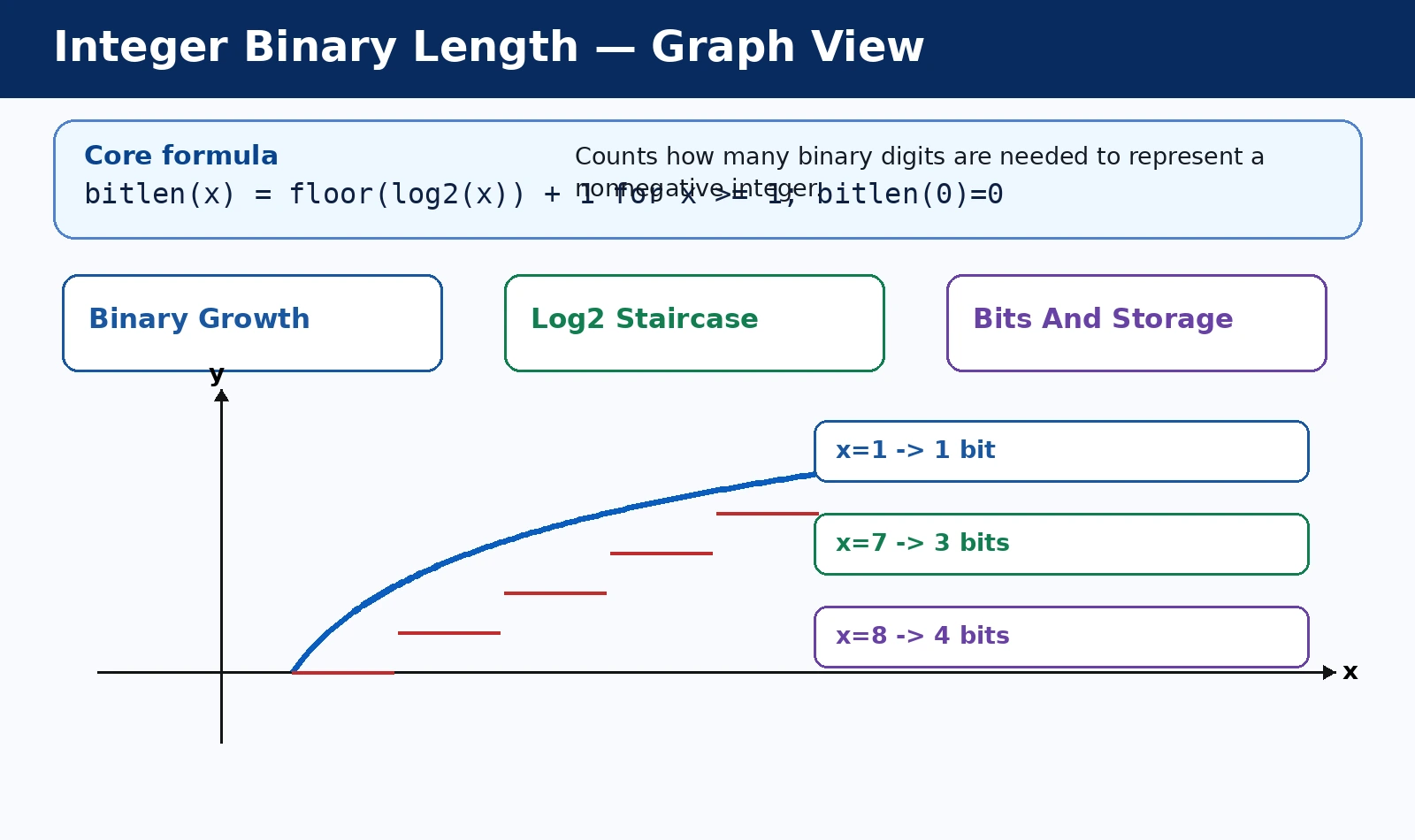

Consider how the binary length of an integer grows as its value increases. Each time a number doubles, its binary length increases by exactly one bit. Because the length grows only logarithmically with the value, even very large numbers fit in comparatively few bits.
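The doubling rule is easy to observe directly. This short sketch uses a hypothetical helper, `lengths_while_doubling`, that records the bit length of a value each time it is doubled.

```python
def lengths_while_doubling(start: int, steps: int) -> list:
    """Return the bit length of start, 2*start, 4*start, ... for `steps` values."""
    lengths = []
    x = start
    for _ in range(steps):
        lengths.append(x.bit_length())
        x *= 2  # doubling appends one zero bit on the right
    return lengths


print(lengths_while_doubling(5, 4))  # → [3, 4, 5, 6]
```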

Handling the Zero Case

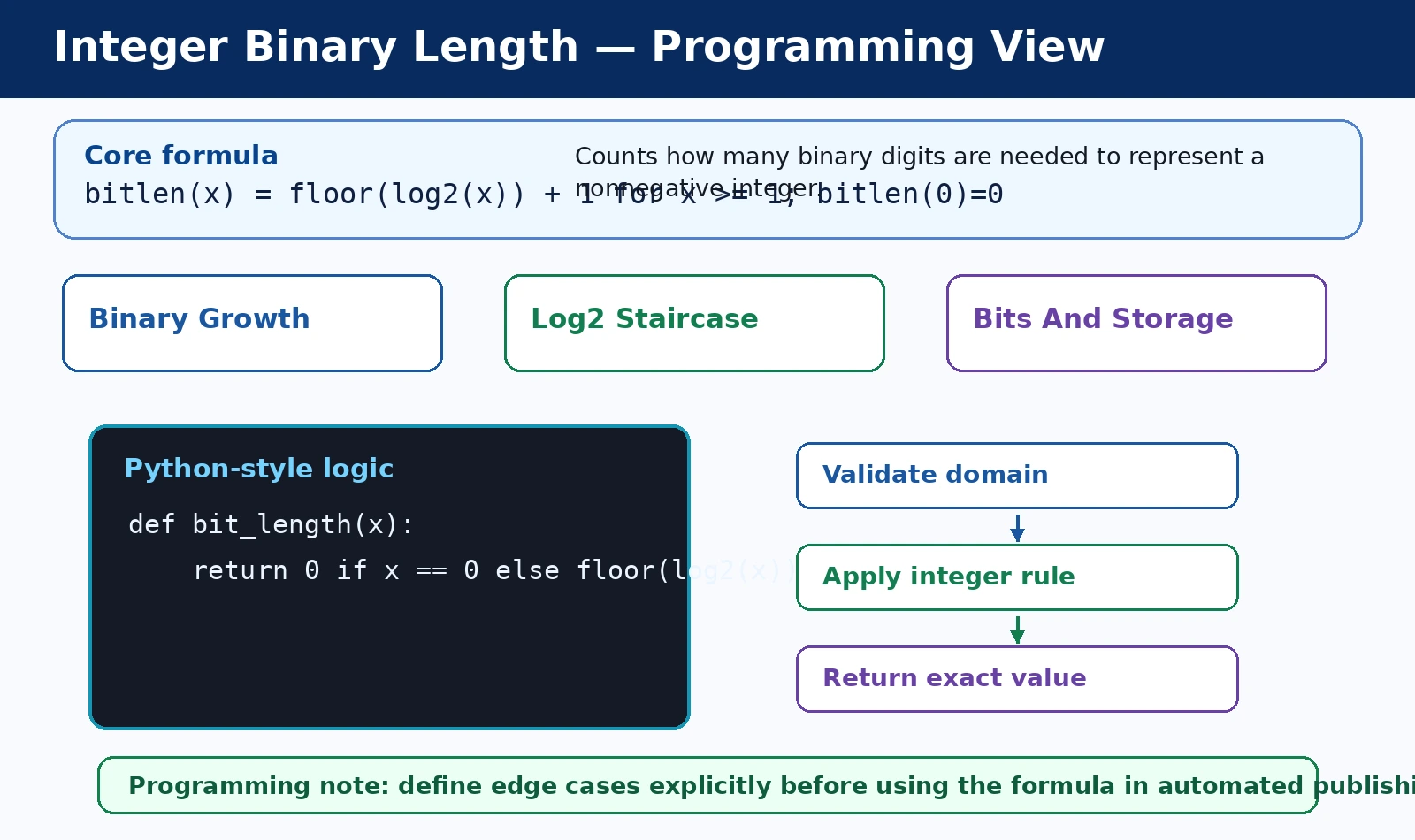

A unique challenge arises when calculating the binary length for the integer zero. Mathematically, the logarithm of zero is undefined in standard real number systems. Therefore, the standard formula cannot be applied directly to this specific input value.

In common computer science conventions, the binary length of zero is explicitly defined as 0. This definition reflects that no bits are required to represent the value zero in an unsigned context. It maintains consistency across various algorithmic implementations.

Some hardware architectures might treat zero as requiring one bit for a placeholder. However, in the context of pure bit-length definitions, zero remains the only exception. This distinction is crucial for programmers writing robust error-handling code.

Software libraries often include a conditional check to handle this edge case safely. By branching the logic, developers avoid mathematical errors such as taking the logarithm of zero. This ensures that the function remains stable regardless of the input integer provided.
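A guarded version of the formula might look like the sketch below. The name `safe_binary_length` is an assumption made for this example. In Python, calling `math.log2(0)` raises a `ValueError`, so the branch for zero has to come before the logarithm.

```python
import math


def safe_binary_length(x: int) -> int:
    """Bit length of a non-negative integer, with zero handled explicitly."""
    if x < 0:
        raise ValueError("expected a non-negative integer")
    if x == 0:
        return 0  # convention: zero needs no bits
    # Floating-point logarithm: adequate for moderately sized inputs only.
    return math.floor(math.log2(x)) + 1


print(safe_binary_length(0), safe_binary_length(1), safe_binary_length(13))
```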

Understanding this exception is fundamental for accurate data structure design and optimization. When calculating total bitstreams, ignoring the zero case can lead to significant off-by-one errors. Proper handling ensures that the resulting bit-length is always logically sound.

Mathematical Foundations and Logarithms

Logarithmic Properties in Base 2

Logarithms are the inverse of exponentiation, making them ideal for binary calculations. Since binary is a base-2 system, ##\log_2(x)## measures how many times two must be multiplied by itself to reach ##x##. This value directly correlates to the positions available in a binary word.

When ##x## is an exact power of two, the logarithm is an integer. For example, ##\log_2(8)## equals 3, indicating that eight is the third power of two. This relationship forms the skeleton of how we measure numerical magnitude in computing.

For numbers that are not powers of two, the logarithm has a fractional part. This fractional part indicates how far the number lies past the previous power of two, measured on a logarithmic scale. It highlights the non-linear nature of bit growth in digital circuits.

Using base-2 logarithms allows for the categorization of numbers into bit-depth buckets. Every number from ##2^n## to ##2^{n+1}-1## inclusive shares the same binary length, namely ##n+1## bits. This grouping is essential for defining data types like bytes and words.
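The bucket idea can be made concrete by grouping a range of integers by their bit length. The helper name `group_by_bit_length` is hypothetical, and the sketch relies on Python's built-in `int.bit_length()`.

```python
from collections import defaultdict


def group_by_bit_length(values):
    """Map each bit length to the list of values that have that length."""
    buckets = defaultdict(list)
    for v in values:
        buckets[v.bit_length()].append(v)
    return dict(buckets)


print(group_by_bit_length(range(1, 8)))
# → {1: [1], 2: [2, 3], 3: [4, 5, 6, 7]}
```

Each bucket starts at a power of two and ends just before the next one, which is the range described above.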

The logarithmic approach is conceptually more compact than iterative bit counting, since a single expression yields the length. It leverages the inherent properties of numbers to derive structural information quickly. This efficiency is a hallmark of advanced mathematical analysis in software engineering.

Floor Functions and Increments

The floor function, denoted by ##\lfloor \cdot \rfloor##, rounds any real number down to the nearest integer. In the bit-length formula, it strips away the fractional component of the logarithm. This step focuses purely on the completed powers of two.

By rounding down, we identify the highest bit position occupied by the number. For instance, a logarithm of 6.7 would be floored to 6, meaning the highest set bit sits at position 6. This process simplifies complex floating-point values into manageable, discrete integer units.

The increment of +1 is the final step in the bit-length calculation. It accounts for the fact that bit positions are indexed from zero, with position ##k## carrying the weight ##2^k##. Adding one converts the highest index into a total count of bits.

Without the floor function, the formula would yield non-integer results which are physically impossible. Bits must exist as whole units within any digital storage medium or processor. Thus, the floor function bridges the gap between theory and reality.

Consistency in applying these functions ensures that the bit-length remains a monotonic property. As integers increase, the calculated length never decreases, mirroring the physical reality. This mathematical reliability is vital for sorting and indexing large datasets.

Computational Significance and Usage

Memory Allocation and Data Types

In low-level programming, knowing the integer binary length helps optimize memory usage. Instead of using a standard 32-bit integer, a programmer might choose a smaller type. This precision reduces the overall footprint of large arrays or databases.

Fixed-width data types often waste space when storing small numbers or flags. By calculating bit length, systems can implement variable-width storage solutions for efficiency. This is particularly important in embedded systems with very limited RAM resources.

Dynamic memory allocation strategies often rely on bit-length calculations to determine buffer sizes. If a value grows beyond its allocated bits, the system must resize. Predictive length calculations help minimize the frequency of these costly operations.

Hardware registers are designed around specific bit-lengths like 8, 16, or 64. Understanding the length of an integer ensures that data fits within these physical constraints. It prevents silent truncation and catastrophic data loss during arithmetic processing.
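As an illustration of fitting values into fixed widths, the sketch below picks the narrowest unsigned width that can hold a value. The function name `smallest_unsigned_width` and the default list of widths are assumptions made for this example, not part of any particular platform's API.

```python
def smallest_unsigned_width(x: int, widths=(8, 16, 32, 64)) -> int:
    """Return the narrowest width from `widths` that can store x unsigned."""
    if x < 0:
        raise ValueError("expected a non-negative integer")
    needed = x.bit_length()
    for width in widths:  # widths are assumed to be in ascending order
        if needed <= width:
            return width
    raise OverflowError(f"{x} needs {needed} bits, more than {widths[-1]}")


for value in (200, 256, 70_000, 2**40):
    print(value, smallest_unsigned_width(value))
```

Raising an error when nothing fits, rather than returning the widest type, keeps truncation from going unnoticed.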

Modern compilers use bit-level analysis to perform various optimizations during the build process. For example, they can replace an expensive division with a faster bitwise shift when the divisor is a known power of two. This can lead to noticeably faster execution times for mathematical software.

Role in Data Compression

Data compression algorithms frequently use integer binary length to encode information more densely. By only storing the necessary bits, algorithms like Huffman coding reduce file sizes. This technique is the backbone of modern internet communication protocols.

Variable-length coding assigns fewer bits to smaller or more frequent integer values. The binary length gives the minimum number of bits needed for the value itself, while a prefix-free code must also convey where each codeword ends. It guides the design of efficient prefix-free codes in transmission.
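One well-known prefix-free code built directly on the bit length is Elias gamma coding. The article does not name it, so it is used here purely as an illustration: a positive integer with bit length ##n## is written as ##n-1## zeros followed by its ##n## binary digits. The function names below are chosen for this sketch.

```python
def elias_gamma_encode(x: int) -> str:
    """Encode a positive integer as an Elias gamma bit string."""
    if x < 1:
        raise ValueError("Elias gamma coding is defined for positive integers")
    n = x.bit_length()
    # n - 1 leading zeros announce the length, then the n binary digits follow.
    return "0" * (n - 1) + bin(x)[2:]


def elias_gamma_decode(bits: str) -> int:
    """Decode a single Elias gamma codeword back to its integer."""
    zeros = len(bits) - len(bits.lstrip("0"))
    return int(bits[zeros:2 * zeros + 1], 2)


print(elias_gamma_encode(13))         # → 0001101
print(elias_gamma_decode("0001101"))  # → 13
```

The codeword for 13 uses 7 bits rather than 4: the three extra zeros are the cost of making the code self-delimiting.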

In image and video compression, pixel values are often stored using their minimal bit-length. This reduces the bandwidth required to stream high-definition content across global networks. Every bit saved contributes to a smoother and faster user experience.

Entropy coding relies on the relationship between probability and bit-length to achieve high ratios. The more predictable a number is, the shorter its optimal binary representation becomes. Mathematical length serves as the baseline for these complex transformations.

Without the ability to calculate and manipulate bit-lengths, modern data storage would be impossible. The sheer volume of global data requires the extreme efficiency provided by bit-level optimization. It remains a cornerstone of information theory and practice.

Practical Implementation and Examples

Step-by-Step Calculation for x=13

To illustrate the concept, let us calculate the integer binary length for the number 13. First, we determine the base-2 logarithm of 13, which is approximately 3.7. This value indicates that 13 lies between ##2^3## and ##2^4##.

Next, we apply the floor function to our result, ##\lfloor 3.7 \rfloor = 3##. This step tells us that the highest power of two not exceeding 13 is ##2^3 = 8##. It effectively discards the remainder that doesn't reach the next power.

Finally, we add one to the result: ##3 + 1 = 4##. This indicates that the integer binary length of 13 is four bits. We can verify this by looking at the binary representation of 13.

In binary, 13 is written as 1101, which clearly consists of four individual digits. The formula correctly predicted the length without needing to perform the actual conversion. This demonstrates the power of the logarithmic approach in mathematics.
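The same three steps can be traced in Python. This is a small sketch with variable names chosen for readability; it compares the formula's result with the actual number of binary digits.

```python
import math

x = 13

log_value = math.log2(x)          # step 1: base-2 logarithm, roughly 3.7
floored = math.floor(log_value)   # step 2: floor gives 3
length = floored + 1              # step 3: add one gives 4

binary_digits = bin(x)[2:]        # "1101", with the "0b" prefix stripped

print(floored, length, binary_digits)  # → 3 4 1101
```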

This example highlights how the formula works for any arbitrary positive integer value. Whether the number is small like 13 or massive, the logic remains identical. It provides a universal tool for bit-depth analysis across all computing fields.

Algorithmic Efficiency in Programming

In software development, calculating binary length is often done using built-in CPU instructions. Many modern processors include a "Count Leading Zeros" (CLZ) instruction for this purpose; subtracting its result from the word width gives the bit length. This hardware-level support makes the calculation extremely fast and efficient.
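Python has no direct CLZ operation, so the sketch below emulates one in software for a fixed word width, purely to show how a leading-zero count relates to the bit length. The function names and the default width of 32 are assumptions made for this illustration.

```python
def count_leading_zeros(x: int, width: int = 32) -> int:
    """Software emulation of CLZ for an unsigned value of the given width."""
    if not 0 <= x < (1 << width):
        raise ValueError(f"value does not fit in {width} unsigned bits")
    count = 0
    mask = 1 << (width - 1)  # start at the most significant bit
    while mask and not (x & mask):
        count += 1
        mask >>= 1
    return count


def bit_length_from_clz(x: int, width: int = 32) -> int:
    """Bit length is the word width minus the number of leading zeros."""
    return width - count_leading_zeros(x, width)


print(count_leading_zeros(13), bit_length_from_clz(13))  # → 28 4
```

In this emulation a zero input yields a count equal to the full width, so the bit length comes out as 0. Real hardware instructions and compiler intrinsics differ in how they treat zero, so that case is worth checking on the target platform.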

When hardware support is unavailable, programmers use bit-shifting loops or lookup tables. A common method involves shifting the number right until it reaches zero while incrementing. This iterative process effectively counts the bits one by one manually.
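The shifting method described above can be sketched as follows. The function name `bit_length_by_shifting` is chosen for this example.

```python
def bit_length_by_shifting(x: int) -> int:
    """Count bits by shifting right until the value reaches zero."""
    if x < 0:
        raise ValueError("expected a non-negative integer")
    count = 0
    while x:
        x >>= 1     # drop the least significant bit
        count += 1  # one more bit was present
    return count


print(bit_length_by_shifting(13))  # → 4
```

The loop runs once per bit and uses only integer operations, so it stays exact for integers of any size. It also returns 0 for an input of zero without a special case.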

Bit-length functions are a staple in languages like Python, C++, and Java. For instance, Python’s int.bit_length() method provides this value directly to the user. These abstractions simplify the developer's task while maintaining high performance under the hood.
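A brief usage sketch of Python's built-in method follows. It needs no floating-point arithmetic, so it stays exact for very large integers, and it returns 0 for zero.

```python
values = (0, 1, 13, 255, 2**100)
lengths = [v.bit_length() for v in values]

print(lengths)  # → [0, 1, 4, 8, 101]

# The sign is ignored: a negative value reports the length of its magnitude.
print((-13).bit_length())  # → 4
```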

Efficiency in this calculation is critical for cryptographic algorithms and large-scale simulations. When processing billions of integers, even minor delays in bit-length checks can accumulate. High-performance computing relies on optimized mathematical implementations of these basic definitions.

Ultimately, the integer binary length is more than just a simple math formula. It is a bridge between the abstract world of numbers and physical hardware. Mastering its calculation is a prerequisite for any serious study of computer science.


